Intraobserver Reliability on Classifying Bursitis on Shoulder Ultrasound - Tyler M. Grey, Euan Stubbs, Naveen Parasu, 2023

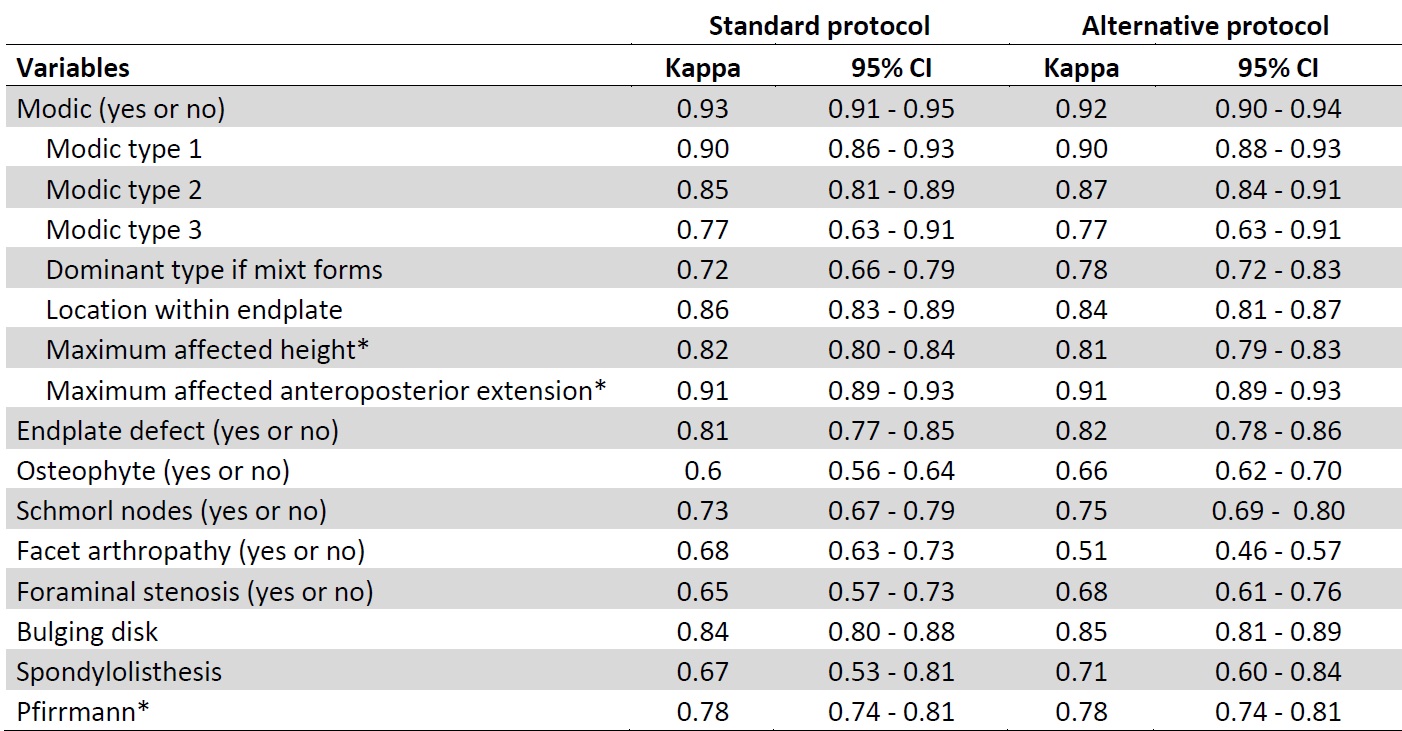

Intra and Interobserver Reliability and Agreement of Semiquantitative Vertebral Fracture Assessment on Chest Computed Tomography | PLOS ONE

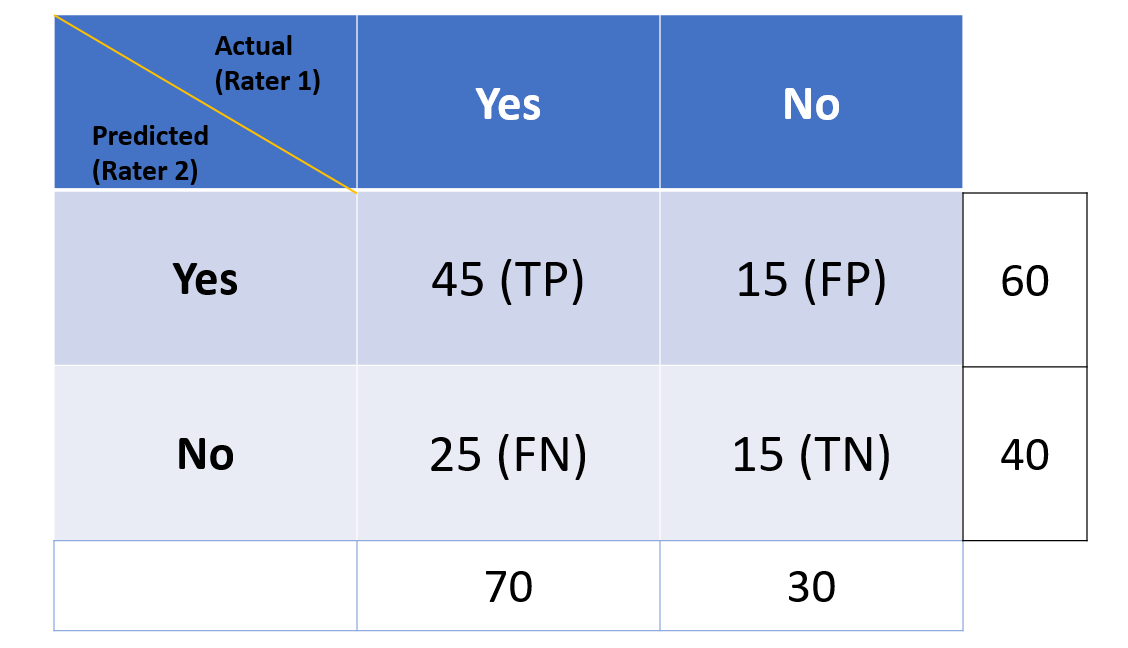

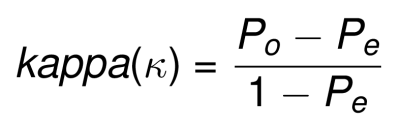

Inter-Annotator Agreement (IAA). Pair-wise Cohen kappa and group Fleiss'… | by Louis de Bruijn | Towards Data Science

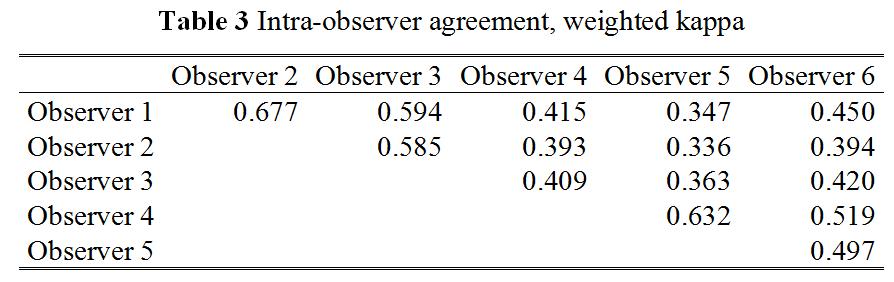

Inter- and Intra-observer Variability in Biopsy of Bone and Soft Tissue Sarcomas | Anticancer Research